AI, Financial Crime, and the Dilemma of Responsibility

New forms of AI can think for themselves. Who should be held responsible when they decide to commit financial crime?

I’m fortunate to live on a beautiful old street in Amsterdam, not far from the city’s tree-lined canals. But in recent weeks, a crisis has arisen, one that has occupied both neighbors and passersby: dog poo. Someone is repeatedly failing to clean up after their pup.1 And apparently this is a national problem. The Netherlands maintains statistics on “hondenpoep,” finding in 2021 that it was the number one neighborhood nuisance.2 The nuisance is so severe, in fact, that one politician has suggested collecting DNA samples to identify delinquent owners.3 This special task force has yet to come to fruition. But owners are now subject to a €150 “hufterboete,” a wonderful Dutch term which translates to “scumbag fine.”

Naturally, we hold owners, not their dogs, responsible for these offenses. While it would be fun to dress up a Golden Retriever in a miniature suit and cross-examine it in court, we generally don’t consider dogs independent legal persons responsible for their actions.4 They are, in the cold eyes of the law, mere “property.”5 Therefore, when your neighbor’s Shih Tzu pees on your flowers or bites your uncle’s hand, there is little confusion about who is liable.

But this distinction is not always so clear. The world is now inundated with machines, from Roombas to self-guided missiles, capable of exercising discretion and operating with varying levels of autonomy. Artificial intelligence represents the zenith of these innovations, facilitating technologies that replicate — and in some cases surpass — the cognitive functions of the human brain.6

These developments have raised complicated questions about how liability (in both the civil and criminal sense) should work when machines can make independent decisions that impose harm on others.7 And these complications are now at the center of one of the most fascinating legal debates in finance. Namely: who should be held responsible if AI commits financial crime?

Enter the Robots

In October 2023, Bloomberg profiled Neo IVY Capital Management, a new hedge fund focused on the use of AI technology.8 Neo’s website summarizes their overarching investment thesis:

A good trader is characterized by making good decisions with all the information from the market, and it usually takes years to learn. Can a computer model achieve the same feat, namely, to digest a massive amount of input data, and then make decisions to maximize the future reward? With the recent advances in deep learning, it is now possible to train a neural network based model with reinforcement learning.

The primary innovation alluded to here is “reinforcement learning.” This is a sub-type of AI in which agents (AI programs) interact with their environment, learning through trial and error how to solve sequential decision-making problems to achieve some objective.9 Humans set the objective. But it is the AI program which autonomously explores which intermediate steps it should take to achieve it.10 Neo also alludes to the concept of “deep learning.” This generally refers to AI programs powered by artificial neural networks. One application of this technology is to categorize data and recognize patterns more effectively than humans. For example, scientists are exploring how AI programs using “deep learning” may be able to learn from CT scans how to more effectively detect early-stage cancer.11
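To make the trial-and-error idea concrete, here is a minimal sketch of tabular Q-learning, one of the simplest reinforcement learning methods. It is an illustration only and not Neo's (or any fund's) actual approach: the five-position "line world," the reward of 1 at the goal, and every name and parameter value below are assumptions chosen for the toy. A human sets the objective (reach the last position); the program works out on its own which steps get it there. "Deep" reinforcement learning replaces the small lookup table used here with a neural network.

```python
import random

# Hypothetical toy environment: positions 0..4 on a line.
# The human-defined objective is to reach position 4.
N_STATES = 5
ACTIONS = (-1, +1)        # step left or step right
GOAL = N_STATES - 1


def step(state, action):
    """Move along the line; pay a reward of 1 only on reaching the goal."""
    next_state = min(max(state + action, 0), GOAL)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward, next_state == GOAL


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn a table of action values by trial and error (Q-learning)."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action index]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            if rng.random() < epsilon:
                a = rng.randrange(2)           # explore: try a random action
            else:
                best = max(q[state])           # exploit, breaking ties at random
                a = rng.choice([i for i, v in enumerate(q[state]) if v == best])
            next_state, reward, done = step(state, ACTIONS[a])
            # Nudge the estimate toward reward plus discounted future value.
            future = 0.0 if done else gamma * max(q[next_state])
            q[state][a] += alpha * (reward + future - q[state][a])
            state = next_state
    return q


def greedy_policy(q):
    """The action the trained agent prefers in each non-goal state."""
    return [ACTIONS[max(range(2), key=lambda i: q[s][i])] for s in range(GOAL)]


if __name__ == "__main__":
    print(greedy_policy(train()))
```

Nobody tells the agent to "step right." It only ever sees the reward, and after training its preferred action in every position is +1. Swap the toy reward for trading profit and the same logic applies: the program pursues whatever the objective pays for, by whatever intermediate steps it happens to discover.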

What hedge funds like Neo hope is that such methods can be used to identify and exploit similar patterns in financial markets. Through reinforcement learning, AI should hypothetically be able to autonomously make trading decisions that maximize returns or, equally important, hedge against risk.12

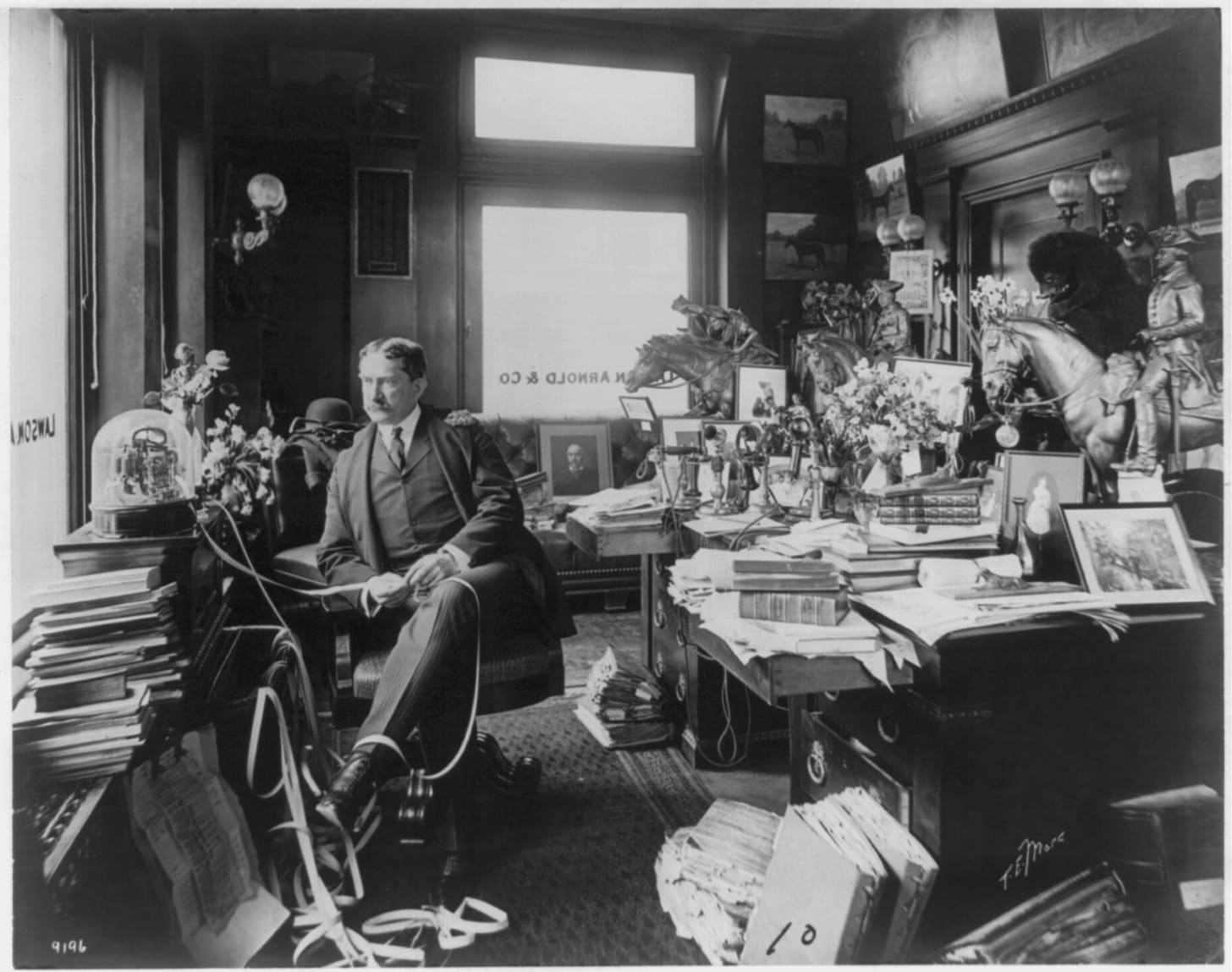

This is a novel innovation. But finance has long been experimenting with the use of computers to replicate human intelligence. In the 1980s, for example, researchers began using computer algorithms to predict stock price movements.13 And if we think more broadly about automation (of which AI is arguably one end of the spectrum14), the relationship goes back further. In the 19th century, for example, the creation of the ticker tape — which electronically distributed stock prices to anyone with the right machine — revolutionized trading. Brokers, such as the gentleman pictured below, were now able to monitor the market without having to leave the comfort of their (statue-adorned) offices.15

But the most disruptive innovation to financial markets, and the one on which reinforcement learning most directly builds, is algorithmic trading. There are differences of opinion on what exactly this term means. But the EU’s definition is informative: “…trading in financial instruments where a computer algorithm automatically determines individual parameters of orders…” (emphasis added).16

These semi-autonomous algorithms have largely replaced human traders.17 And they increasingly operate at incredible speeds in what is referred to as High-Frequency Trading (HFT). The world’s most sophisticated hedge funds have been engaged in a decades-long race to trade at ever-faster speeds, now measuring their execution times in nanoseconds (billionths of a second).18 Because humans cannot make conscious decisions at these speeds, HFT necessitates allowing algorithms to exercise some level of independent discretion. Thus the industry was using AI long before ChatGPT captured the world’s attention.

But there is a problem. AI programs are rational maximizers in the purest economic sense, with no concerns about morality or ethics.19 If we ask them to achieve some objective, ‘they’ may conclude that the most rational means by which to do so is to engage in financial crime. This could take the form of traditional market manipulation, such as spoofing and quote stuffing.20 But with deep reinforcement learning, it is plausible that AI-powered trading agents would find new ways to manipulate prices, engage in collusion, and evade existing regulations.21

If AI programs independently decide to engage in financial crime, who is responsible for their actions? Establishing liability in “normal” human-to-human circumstances is already a hugely complex topic. Throwing AI into the mix is a bit like trying to deep-fry a frozen turkey.22 As a new wave of scholarship demonstrates, it poses unique challenges that existing laws may be ill-equipped to handle.

Enter the Lawyers

In many jurisdictions, prosecuting market manipulation requires establishing that the defendant acted with intent.23 This is why cases so often feature embarrassing emails and texts. In 2017, for example, the SEC filed a complaint against a Ukrainian trading firm and its employees for engaging in a form of market manipulation referred to as “layering.” The emails are brutal:

My name is Nathan and I received your contact information from Serge…He told me that you had a group of traders and were interested in setting up some accounts for layering…this is definitely something I can help you with…I am one of the very few places that still allow layering…It costs a lot of money for legal expenses to keep this going these days as no other firms allow this.24

But the concept of intent — or what lawyers refer to as scienter — is about the mental state of a human.25 Existing laws do not generally consider machines to be legal persons capable of having a mental state. This has led some legal scholars to question whether it would even be possible, under existing statutes, to demonstrate that AI programs intended to manipulate markets. Gregory Scopino summarizes the issue with respect to trading algorithms:

…what if it is not just difficult but impossible to prove scienter or any other culpable mental state— e.g., specific intent —in a particular case because, in fact, no human actually had intended the [algorithmic trading system] in question to do anything improper…Under these circumstances, causes of action that have a scienter or culpable mental state requirement…most likely would be ineffective.

The difficulty of establishing intent is heightened when we talk about AI programs using deep reinforcement learning. As noted above, humans set the objective (which could be as simple as: maximize returns), but it is the AI program which determines the necessary intermediate steps. And it is often extremely difficult, if not impossible, to determine how AI agents make such determinations.26 This is the infamous “black box” problem. It implies that, even if we were to determine that AI programs do have a mental state that could formulate intent to engage in crime, we would not have any evidence of that mental state. AI programs do not, in other words, make the mistake of writing self-incriminating emails.

From Dogs to Owners

But even if they could, we would not be talking about holding AI programs responsible for their actions. These programs do not (yet) have independent legal personalities or personal assets that could be seized. Nor do we have electronic prisons where we can keep criminal AI programs behind bars.

The question, instead, is whether we can hold the owners of these programs (and/or individual programmers) responsible for actions that AI independently decides to undertake. One might assume the situation is no different from dogs. Allowing AI programs to participate in markets is a bit like letting your Rottweiler off the leash in a public park.27 If they bite someone, surely it’s your fault?

This logic can be roughly equated to the concept of strict liability. Strict liability is a standard which maintains that the mere existence of harm is sufficient to assign blame (regardless of intent). But applying strict liability is not always practical. Professor Yesha Yadav explores this problem in her influential article, “The Failure of Liability in Modern Markets.” Yadav observes that trading algorithms, despite being preprogrammed by their creators, must make independent decisions in unpredictable real-world environments at speeds which humans cannot perceive. Thus algorithms may engage in harmful actions (either purposefully or accidentally) that neither humans nor companies can fully anticipate.

Applying strict liability here would, therefore, expose virtually every firm to constant (and potentially very expensive) sanctions.28 Regulators would not have the resources to enforce this equally.29 And it would disincentivize the use of algorithms altogether by raising the regulatory risks to unsustainable levels.

Another group of scholars carries this conversation forward to AI programs employing deep reinforcement learning. They note that the black box issue makes it extremely hard to establish causal links between an AI program’s actions and the alleged harm (e.g., in the context of assessing damages in a civil suit). And because these processes are unknowable to programmers, it is difficult to determine if humans or companies could have reasonably “foreseen” the harm and/or be deemed “negligent” (among other complications).30

But despite the difficulties of applying standard forms of liability, we wouldn’t want to let companies off the hook entirely. This would create a perverse incentive to release AI programs into financial markets with reckless abandon.31 Thus we are faced with a dilemma of responsibility. The logical next question, then, is how we can design market rules in a manner that affords sufficient accountability without suppressing the use of innovative technologies.

Enter the Regulators

On February 13th, SEC Chairman Gary Gensler delivered a speech on AI in financial markets at Yale Law School. Gensler begins with an anecdote which, in writing, sort of sounds like it was written by ChatGPT:

Scarlett Johansson played a virtual assistant, Samantha, in the 2013 movie Her. The movie follows the love affair between Samantha and Theodore, a human played by Joaquin Phoenix. Late in the movie, Theodore is shaken when he gets an error message — “Operating System Not Found.” Upon Samantha’s return, he asks if she’s interacting with others. Yes, she responds, with 8,316 others. Plaintively, Theodore asks: You only love me, right? Samantha says she’s in love with 641 others. Shortly thereafter, she goes offline for good.

OK! Gensler proceeds to address the liability complications posed by self-learning algorithms. He notes, understandably, that many of these questions will have to be decided by courts rather than the SEC.

What is less understandable is his lack of urgency. Gensler notes, “Right now, though, the opportunities for deception or manipulation most likely fall in the programmable and predictable harm categories rather than being truly unpredictable.” While it is true that deep reinforcement learning remains in its infancy, trading algorithms have, for many years now, featured unpredictable risks. Will we look back at these quotes as another missed opportunity for the SEC to engage in proactive regulation?

Other regulators appear more concerned. The CFTC has sought public comment on, among other things, how AI might increase the risk of manipulation.32 And last year the Netherlands’ Authority for the Financial Markets issued a report on this topic. The AFM surveyed Dutch trading firms, finding that machine learning is already used in 80-100% of their algorithms.33 These firms are not yet employing reinforcement learning but expressed strong interest in the possibilities. The AFM warns that, once such programs are deployed, there is no reason to assume AI agents will not engage in market manipulation to satisfy their goals.34

We appear, in other words, to be standing at a precipice. Various solutions have been proposed to address the risks of AI, such as strengthening internal controls to ensure humans are kept “in-the-loop.”35 And scientists are working on how to improve the explainability of AI programs, which would help simplify some of the liability issues posed by the black box problem.36 But it seems unlikely that such solutions will keep pace with the field’s rapid commercial advances. The fact of the matter is that the robotic barbarians are already at the gate. And it is only a matter of time before someone lets them in.

Could there be multiple perpetrators? Without going into too much detail, let’s just say the evidence supports the single-bullet theory.

In the category of physical deterioration (only bested by fast driving and parking problems). Happy reading.

De Telegraaf, Poop dumpers traced with DNA test.

There have, however, been debates on assigning legal personhood to animals for the purpose of their protection (e.g., in the context of animal testing). And in the US, Illinois became the first state to require judges to consider the well-being of pets when settling divorce proceedings. See Suzanne Monyak’s New Republic piece, “When the Law Recognizes Animals as People.”

The precise terminology surely varies by jurisdiction and legal system, this non-expert imagines.

Siemens et al. provide a nice readable summary of the current state of AI in “Human and artificial cognition.”

Karni Chagal-Feferkorn provides a helpful historical overview with respect to product liability in “Am I an Algorithm or a Product? When Products Liability Should Apply to Algorithmic Decision-Makers.” See also Mark A. Chinen’s article, “The Co-Evolution of Autonomous Machines and Legal Responsibility.”

Justine Lee, “Can AI Beat the Market? Wall Street Is Desperate to Try.”

Paraphrasing from Yuxi Li’s paper, “Deep Reinforcement Learning: An Overview.”

LeCun et al., “Deep learning.”

For a review, see van der Kamp et al., “Artificial Intelligence in Pediatric Pathology: The Extinction of a Medical Profession or the Key to a Bright Future?”

See, e.g., Briola et al., “Deep Reinforcement Learning for Active High Frequency Trading.” Deep reinforcement learning requires, however, tremendous computational power and thus remains impractical for most market participants.

Sydney Swaine-Simon and Abhishek Gupta, “The History of AI in Finance.”

We lack an agreed definition of AI (see Pei Wang’s “On Defining Artificial Intelligence”). Scholars have also noted that automation is a spectrum of innovations which is more accurately measured on continuous scales (see Karni A. Chagal-Feferkorn’s “Am I an Algorithm or a Product? When Products Liability Should Apply to Algorithmic Decision-Makers”).

See John Handel’s wonderfully informative history, “The Material Politics of Finance: The Ticker Tape and the London Stock Exchange, 1860s–1890s.”

Derived from the EU’s Markets in Financial Instruments Directive II (MiFID II).

I’ve co-authored two book chapters on this topic in Global Algorithmic Capital Markets and an Oxford Handbook. For a fuller treatment, see Dark Pools, Flash Boys, and Darkness by Design.

Though one set of scholars has explored how AI might be infused with ethical considerations important to humans. It is plausible that an AI trading program could be provided with instructions to maximize returns while abiding by regulatory standards. But those standards are often subject to interpretation. If grey areas exist, would AI find new ways to push the boundaries and, in turn, shape how we understand the rules?

Spoofing and layering are two forms of manipulation in which traders place and quickly cancel orders to buy/sell, giving others a false sense of liquidity and market direction. Quote stuffing refers to placing and cancelling enormous volumes of quotes in order to disrupt trading systems and other market participants’ strategies.

Azzutti et al. write, “Significantly, order-based algorithmic strategies are somehow constrained by venues’ rules limiting the “orders to transactions ratio” (OTR), aimed at disadvantaging non bona fide trading orders. However, by algorithmic learning, autonomous AI traders could find ways to optimize manipulation strategies within OTR statutory limits. Indeed, recent in-lab market simulations involving “reinforcement learning” agents provide some first evidence…” (p. 99).

In 2000, the International Organization of Securities Commissions published a report highlighting how these standards vary by jurisdiction.

This is true even for corporate cases. As Gregory Scopino writes, “As lawsuits against business entities must prove scienter in the employee or employees involved in the culpable act or acts. That is, to determine corporate scienter, a court will look to the mental state of the corporate official or officials who made the allegedly improper actions—uttering false statements or making misrepresentations for instance—with the idea that a corporation can only know what is known by the persons acting on its behalf. Therefore, the mental state requirement of any given cause of action ultimately must either be met—or not—in the mind of some specific human or humans” (p. 251).

As Gina-Gail S. Fletcher and Michelle M. Le phrase it, “How the algorithm determines its output is often unknown to the programmer, thereby rendering the decision-making process opaque even to the algorithm’s coders” (pp. 301-2).

Sorry, I know Rottweilers can be sweet. But there’s a reputation.

As Yadav puts it, “Predictive programming implies an endemic propensity for ad hoc, unpredictable error, meaning that strict liability can give rise to widespread breaches…Also, with risks capable of spreading rapidly across many markets, the cost of harms can far exceed the amount that a single trader might be able to bear” (pp. 1039-40).

Ibid.

Azzutti et al., “Machine Learning, Market Manipulation and Collusion on Capital Markets: Why the "Black Box" Matters” (pp. 120-1).

Yadav discusses this as an alternative to strict liability: a standard which requires evidence of intent or negligence. But: “…an intent-based standard encourages risk taking by letting traders off the hook for risky but non-malicious behavior” (p. 1040).

AFM, Machine Learning in Algorithmic Trading (p. 12).

Ibid., p. 16.

Zetzsche et al., “Artificial Intelligence in Finance: Putting the Human in the Loop.”
